Discover and explore top open-source AI tools and projects—updated daily.

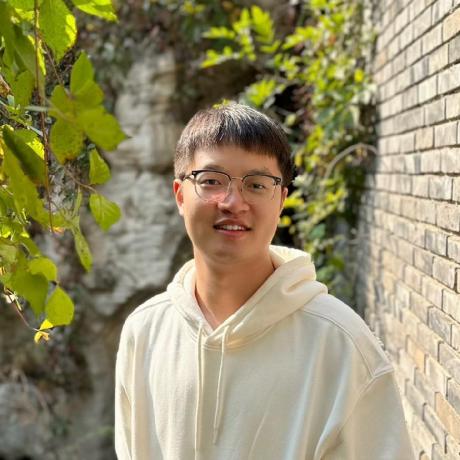

diffusion-4k by zhang0jhon

Synthesize ultra-high-resolution images with latent diffusion models


Diffusion-4K offers a framework for direct ultra-high-resolution image synthesis using latent diffusion models, targeting researchers and practitioners in generative AI. It addresses the lack of high-resolution benchmarks and introduces a wavelet-based fine-tuning method for enhanced detail synthesis, particularly with large-scale models like SD3-2B and Flux-12B.

How It Works

The framework introduces the Aesthetic-4K benchmark, a curated 4K dataset with GPT-4o-generated captions, and two new evaluation metrics, GLCM Score (based on the gray-level co-occurrence matrix) and Compression Ratio, alongside standard ones (FID, Aesthetics, CLIPScore). Its core technical contribution is a wavelet-based fine-tuning approach that enables direct training on photorealistic 4K images, improving detail preservation and synthesis quality in latent diffusion models.
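To make the wavelet idea concrete, here is a minimal sketch of a one-level Haar decomposition and a subband-weighted squared-error loss. It is an illustration only, not the project's training code: the function names `haar_dwt2` and `wavelet_mse`, the choice of the Haar wavelet, and the weighting scheme are all assumptions, and a plain NumPy array stands in for a latent channel.

```python
import numpy as np


def haar_dwt2(x: np.ndarray):
    """One-level orthonormal 2D Haar transform of an (H, W) array.

    H and W must be even. Returns four (H/2, W/2) subbands:
    the low-pass approximation followed by three detail bands.
    """
    x = np.asarray(x, dtype=np.float64)
    a, b = x[0::2, 0::2], x[0::2, 1::2]  # top-left, top-right of each 2x2 block
    c, d = x[1::2, 0::2], x[1::2, 1::2]  # bottom-left, bottom-right
    approx = (a + b + c + d) / 2.0       # local average (low frequency)
    col_detail = (a - b + c - d) / 2.0   # change between adjacent columns
    row_detail = (a + b - c - d) / 2.0   # change between adjacent rows
    diag_detail = (a - b - c + d) / 2.0  # diagonal change
    return approx, col_detail, row_detail, diag_detail


def wavelet_mse(pred, target, weights=(1.0, 1.0, 1.0, 1.0)) -> float:
    """Weighted mean of per-subband squared errors (hypothetical loss)."""
    bands_p = haar_dwt2(pred)
    bands_t = haar_dwt2(target)
    per_band = [float(np.mean((p - t) ** 2)) for p, t in zip(bands_p, bands_t)]
    w = np.asarray(weights, dtype=np.float64)
    return float(np.dot(w, per_band) / w.sum())


# Usage: up-weight the three detail bands relative to the approximation.
rng = np.random.default_rng(0)
target = rng.normal(size=(8, 8))  # stand-in for one latent channel
pred = target + 0.1 * rng.normal(size=(8, 8))
loss = wavelet_mse(pred, target, weights=(1.0, 2.0, 2.0, 2.0))
```

With equal weights this loss reduces to ordinary pixel-wise MSE, because the transform is orthonormal; raising the detail-band weights is one simple way to push a model toward preserving high-frequency content.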

Quick Start & Requirements

- Install: pip install -r requirements.txt

- Prerequisites: Pre-trained models (SD3-2B, Flux-12B) and the Aesthetic-4K dataset must be downloaded separately. A CUDA-capable GPU is not listed explicitly but is presumably needed to run the diffusion models.

- Links: Aesthetic-4K dataset (huggingface/Aesthetic-4K), SC-VAE training code (sc-vae), Aesthetic-Train-V2 (huggingface/Aesthetic-Train-V2).

Highlighted Details

- Introduces the Aesthetic-4K benchmark and GLCM Score/Compression Ratio metrics for evaluating ultra-high-resolution image synthesis.

- Proposes a wavelet-based fine-tuning method for direct 4K image training.

- Demonstrates effectiveness with large models like SD3-2B and Flux-12B.

- Provides example generation commands for resolutions up to 4096x3072.
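The two texture-oriented metrics named in the first bullet can be illustrated with a rough sketch. The exact definitions used by the project are not given here, so everything below is an assumption: `glcm_entropy` uses the entropy of a gray-level co-occurrence matrix as a stand-in texture statistic, and `compression_ratio` uses lossless zlib compression as a stand-in codec. All function names are hypothetical.

```python
import zlib

import numpy as np


def glcm(gray: np.ndarray, levels: int = 8) -> np.ndarray:
    """Normalized co-occurrence matrix for horizontally adjacent pixels.

    `gray` is an (H, W) uint8 image; intensities are quantized to `levels` bins.
    """
    q = (gray.astype(np.int64) * levels) // 256  # quantize to [0, levels)
    left = q[:, :-1].ravel()                     # each pixel ...
    right = q[:, 1:].ravel()                     # ... and its right neighbour
    counts = np.zeros((levels, levels), dtype=np.float64)
    np.add.at(counts, (left, right), 1.0)        # accumulate pair counts
    return counts / counts.sum()


def glcm_entropy(gray: np.ndarray, levels: int = 8) -> float:
    """Entropy (in bits) of the co-occurrence matrix; higher means richer texture."""
    p = glcm(gray, levels)
    nz = p[p > 0]
    return float(-(nz * np.log2(nz)).sum())


def compression_ratio(gray: np.ndarray) -> float:
    """Raw byte count divided by zlib-compressed byte count (assumed codec)."""
    raw = np.ascontiguousarray(gray, dtype=np.uint8).tobytes()
    return len(raw) / len(zlib.compress(raw, 9))


# Usage: a flat image has no texture and compresses well; noise is the opposite.
rng = np.random.default_rng(0)
flat = np.zeros((64, 64), dtype=np.uint8)
noisy = rng.integers(0, 256, size=(64, 64), dtype=np.uint8)
scores = {
    "flat": (glcm_entropy(flat), compression_ratio(flat)),
    "noisy": (glcm_entropy(noisy), compression_ratio(noisy)),
}
```

The intuition is that images with more fine detail tend to have a more spread-out co-occurrence matrix and are harder to compress, which is why statistics of this kind are useful at 4K resolution where detail is the main concern.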

Maintenance & Community

The project is associated with CVPR 2025 and has an arXiv paper. Links to related datasets and training code are provided. No specific community channels (Discord/Slack) or roadmap are mentioned in the README.

Licensing & Compatibility

The README does not explicitly state a license for the code or the model checkpoints. It acknowledges dependencies on Diffusers, Transformers, SD3, Flux, and CLIP+MLP Aesthetic Score Predictor, whose licenses would apply.

Limitations & Caveats

The project is tied to CVPR 2025, so it is best treated as research code that may change. The core Diffusion-4K components carry no explicit license, which could block commercial use or integration into closed-source projects.
