Discover and explore top open-source AI tools and projects—updated daily.

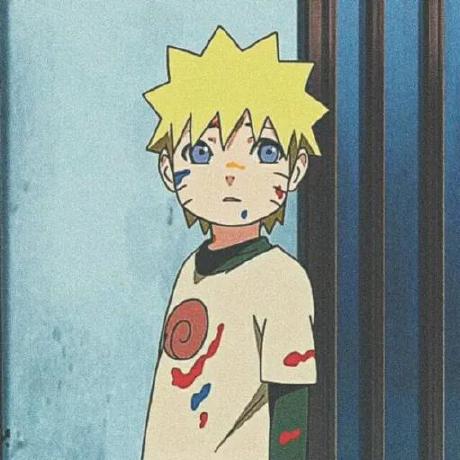

AnimeGamer by TencentARC

Anime life simulation with next game state prediction

Top 80.0% on SourcePulse

AnimeGamer enables infinite anime life simulations by predicting and generating consistent multi-turn game states, including dynamic animation sequences and character attribute updates. It targets users interested in interactive storytelling and character-driven experiences, allowing them to direct anime characters through natural language commands.

How It Works

The system leverages Multimodal Large Language Models (MLLMs) to generate game states. It employs a three-phase training process: first, an encoder-decoder model with a diffusion-based decoder reconstructs videos conditioned on action intensity; second, an MLLM predicts subsequent game state representations from historical inputs; and third, the decoder is fine-tuned using the MLLM's predictions to enhance animation quality. This approach allows for consistent, context-aware video generation and character state evolution.
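At inference time, the design amounts to a loop. On each turn, the MLLM reads the history of game states plus the player's new instruction and predicts the next state, and the decoder turns that state into an animation clip. The sketch below illustrates only that control flow. `GameState`, `simulate`, `toy_predict`, and `toy_decode` are hypothetical names, not AnimeGamer's actual classes or functions. The toy stand-ins replace the real MLLM and diffusion decoder.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class GameState:
    """Hypothetical stand-in for one turn's game state."""
    instruction: str          # natural-language command that produced this state
    action_intensity: float   # hypothetical scalar; the real decoder conditions on action intensity
    stamina: int


def simulate(
    initial: GameState,
    instructions: List[str],
    predict_next: Callable[[List[GameState], str], GameState],
    decode: Callable[[GameState], str],
) -> Tuple[List[GameState], List[str]]:
    """Run a multi-turn simulation: predict each state from the history, then decode it."""
    history = [initial]
    clips = []
    for instruction in instructions:
        # MLLM role (hypothetical): pass the full history so the predictor can use every earlier state.
        next_state = predict_next(history, instruction)
        # Decoder role (hypothetical): render the predicted state as an animation clip.
        clips.append(decode(next_state))
        history.append(next_state)
    return history, clips


# Toy stand-ins so the loop runs; they are NOT the real models.
def toy_predict(history: List[GameState], instruction: str) -> GameState:
    running = "run" in instruction
    intensity = 0.9 if running else 0.2
    delta = -10 if running else 5
    stamina = min(100, max(0, history[-1].stamina + delta))
    return GameState(instruction, intensity, stamina)


def toy_decode(state: GameState) -> str:
    return f"clip<{state.instruction}, intensity={state.action_intensity}>"


if __name__ == "__main__":
    states, clips = simulate(
        GameState("start", 0.0, 100),
        ["run to the hill", "sit and rest"],
        toy_predict,
        toy_decode,
    )
    for state, clip in zip(states[1:], clips):
        print(state.stamina, clip)
```

Keeping the whole history in the predictor's input, rather than only the latest state, mirrors the description of the MLLM predicting from historical inputs.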

Quick Start & Requirements

- Install: Clone the repository, create a conda environment (`conda create -n animegamer python==3.10`), activate it (`conda activate animegamer`), and install the requirements (`pip install -r requirements.txt`).
- Prerequisites: Requires checkpoints for AnimeGamer and Mistral-7B, plus the 3D-VAE from CogvideoX, all placed in the `./checkpoints` directory.
- Gradio Demo: Run `python app.py`. Requires at least 24GB VRAM per GPU for a two-GPU setup, or 60GB VRAM on a single GPU (set `LOW_VRAM_VERSION = False`).
- Inference: Use `python inference_MLLM.py` for state generation and `python inference_Decoder.py` for animation decoding.
- Links: Paper, Gradio Demo
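State generation and animation decoding are separate scripts, so a small driver can run them in order and stop if a stage fails. This is a sketch under several assumptions. It runs `inference_MLLM.py` and `inference_Decoder.py` from the repository root with no arguments, since none are listed here. It also assumes the decoder stage consumes what the MLLM stage produces. The `run_pipeline` helper is hypothetical.

```python
import subprocess
import sys
from typing import Callable, List, Sequence

# Script names come from the README; running them without arguments is an assumption.
STAGES: List[str] = ["inference_MLLM.py", "inference_Decoder.py"]


def run_pipeline(
    stages: Sequence[str] = STAGES,
    runner: Callable[[List[str]], int] = lambda cmd: subprocess.run(cmd).returncode,
) -> List[str]:
    """Run each stage with the current Python interpreter, stopping at the first failure.

    Returns the stages that completed with exit code 0.
    """
    completed: List[str] = []
    for script in stages:
        code = runner([sys.executable, script])
        if code != 0:
            # Assumption: decoding depends on the MLLM stage's output, so a failure ends the run.
            print(f"{script} exited with code {code}; stopping", file=sys.stderr)
            break
        completed.append(script)
    return completed
```

Calling `run_pipeline()` from the repository root runs both stages for real. The injectable `runner` lets the ordering logic be checked without GPUs or checkpoints.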

Highlighted Details

- Generates consistent multi-turn game states with dynamic animation shots and character attribute updates (stamina, social, entertainment).

- Allows cross-anime character interactions, e.g., Pazu from "Castle in the Sky" interacting with Qiqi from "Kiki's Delivery Service".

- Utilizes action-aware multimodal representations and a diffusion-based decoder for video generation.

- Inference code, model weights for specific anime, and a local Gradio demo have been released.
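The character attributes mentioned above (stamina, social, entertainment) change from turn to turn. One simple way to keep that bookkeeping consistent is to apply each predicted change and clamp the result to a fixed range. This sketch assumes integer values in a 0 to 100 range. Neither that range nor the `apply_deltas` helper comes from the project. Only the three attribute names do.

```python
from typing import Dict

# Attribute names from the project description; the value range below is hypothetical.
ATTRIBUTES = ("stamina", "social", "entertainment")


def apply_deltas(
    current: Dict[str, int], deltas: Dict[str, int], lo: int = 0, hi: int = 100
) -> Dict[str, int]:
    """Return a new attribute dict with each delta added and the result clamped to [lo, hi]."""
    unknown = set(deltas) - set(ATTRIBUTES)
    if unknown:
        raise KeyError(f"unknown attributes: {sorted(unknown)}")
    return {
        name: min(hi, max(lo, current[name] + deltas.get(name, 0)))
        for name in ATTRIBUTES
    }
```

For example, applying `{"stamina": 20, "entertainment": -30}` to `{"stamina": 95, "social": 40, "entertainment": 10}` yields stamina 100, social 40, and entertainment 0. Attributes without a delta are carried over unchanged.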

Maintenance & Community

The project is associated with Tencent ARC Lab and City University of Hong Kong. Key components are based on CogvideoX and SEED-X. Open TODOs include releasing the data processing and training code, as well as weights for models trained on mixed anime datasets.

Licensing & Compatibility

The repository does not explicitly state a license in the README. The code is presented for research purposes, and commercial use would require clarification.

Limitations & Caveats

The project is in its early stages: the data processing and training code, and the weights for models trained on mixed anime datasets, have not yet been released. The Gradio demo also has substantial VRAM requirements (at least 24GB per GPU on a two-GPU setup, or 60GB on a single GPU).
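The VRAM thresholds can be turned into a quick pre-flight check before launching `app.py`. The sketch takes per-GPU memory in GB, for example as read from `nvidia-smi`, and picks a setup. It assumes the two-GPU configuration corresponds to `LOW_VRAM_VERSION = True`. The README implies this but does not state it. `pick_demo_setup` is a hypothetical helper.

```python
from typing import Optional, Sequence, Tuple

# Thresholds as stated for the Gradio demo.
TWO_GPU_MIN_GB = 24.0
SINGLE_GPU_MIN_GB = 60.0


def pick_demo_setup(gpu_mem_gb: Sequence[float]) -> Optional[Tuple[str, bool]]:
    """Return (setup, LOW_VRAM_VERSION) for the first setup the GPUs satisfy, or None.

    Assumption: the two-GPU, 24 GB-per-GPU setup is the LOW_VRAM_VERSION = True mode.
    """
    if any(mem >= SINGLE_GPU_MIN_GB for mem in gpu_mem_gb):
        # One large GPU suffices; the README says to set LOW_VRAM_VERSION = False here.
        return ("single GPU", False)
    if sum(1 for mem in gpu_mem_gb if mem >= TWO_GPU_MIN_GB) >= 2:
        return ("two GPUs", True)
    return None
```

The check prefers the single-GPU path whenever one card qualifies. That is an arbitrary choice in this sketch. A machine with two 24GB cards falls through to the two-GPU setup. A 16GB + 16GB + 48GB machine satisfies neither requirement.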

1 year ago

Inactive

- vargHQ
- dexhunter
- Anil-matcha
- LingGuoAI
- YouMind-OpenLab
- alibaba
- op7418
- kuangdd2024
- twwch
- freestylefly
- alecm20
- ddean2009