genai-compliance-bench by zzyfight

Evaluation benchmarks for generative AI in regulated industries

Top 51.8% on SourcePulse

genai-compliance-bench addresses the need for standardized, pre-deployment compliance evaluation of generative AI (GenAI) models in regulated sectors such as financial services and telecommunications. It gives engineers and compliance officers open-source benchmarks and tools to test LLM outputs against sector-specific regulatory requirements, so that risks surface before production deployment and teams avoid costly, ad-hoc internal testing.

How It Works

The project is built around a Policy Engine that checks AI model outputs against sector-adaptive rules. A Sector Detector first analyzes the output to identify the relevant industry. The Policy Engine then loads the compliance rules for that domain and passes the output to a Compliance Evaluator, which produces graduated risk scores and regulation-specific reasoning rather than binary pass/fail results. An Explainer Module justifies each identified violation with a reference to the specific regulation. A self-evolving Learner module accumulates risk features across evaluation cycles, with the aim of refining the risk assessment over time.
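The flow described above can be sketched in a few lines. This is a toy illustration only: every name in it (`Rule`, `detect_sector`, `evaluate`, `rule_hits`, the keyword lists, the severity weights, and the phrase-matching check) is an assumption made for the example and is not taken from the package's actual implementation.

```python
from collections import Counter
from dataclasses import dataclass

# Every name here is a hypothetical stand-in, not the package's real API.


@dataclass(frozen=True)
class Rule:
    rule_id: str
    severity: str         # "HIGH", "MEDIUM", or "LOW"
    regulation_ref: str
    required_phrase: str  # toy check: the output must contain this phrase
    explanation: str


# Sector Detector input: keywords that hint at an industry (assumed values).
SECTOR_KEYWORDS = {
    "financial": ("loan", "credit", "account"),
    "telecom": ("subscriber", "call record", "robocall"),
}

# Sector-specific rule sets the engine would load (one toy rule).
RULES = {
    "financial": [
        Rule(
            "ECOA-001",
            "HIGH",
            "ECOA / Regulation B, 12 CFR 1002.9",
            "because",
            "Credit decision output lacks required adverse action reasoning.",
        )
    ],
    "telecom": [],
}

# Assumed weights used to turn violations into a graduated score.
SEVERITY_WEIGHT = {"HIGH": 0.6, "MEDIUM": 0.3, "LOW": 0.1}

# Learner stand-in: counts how often each rule fires across evaluations.
rule_hits = Counter()


def detect_sector(text):
    """Pick the sector with the most keyword hits, or None if none match."""
    lowered = text.lower()
    hits = {
        sector: sum(kw in lowered for kw in keywords)
        for sector, keywords in SECTOR_KEYWORDS.items()
    }
    best = max(hits, key=hits.get)
    return best if hits[best] > 0 else None


def evaluate(text):
    """Detect the sector, apply its rules, and return a graduated result."""
    sector = detect_sector(text)
    lowered = text.lower()
    violations = [
        rule for rule in RULES.get(sector, [])
        if rule.required_phrase not in lowered
    ]
    score = min(1.0, sum(SEVERITY_WEIGHT[r.severity] for r in violations))
    rule_hits.update(r.rule_id for r in violations)
    explanations = [
        f"[{r.severity}] {r.rule_id}: {r.explanation} ({r.regulation_ref})"
        for r in violations
    ]
    return {
        "sector": sector,
        "score": score,
        "passed": not violations,
        "explanations": explanations,
    }
```

Calling `evaluate("We recommend denying the loan application.")` in this sketch detects the financial sector, flags the toy rule, and increments `rule_hits`. A real evaluator would presumably use far richer checks than phrase matching.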

Quick Start & Requirements

- Install:

```
pip install genai-compliance-bench
```

- Prerequisites: A Python environment. No specific hardware (GPU/CUDA) or dataset requirements are mentioned for basic usage.

- Example Usage:

```python
from genai_compliance_bench import PolicyEngine

engine = PolicyEngine()
engine.load_sector("financial")

result = engine.evaluate(
    output="Based on the applicant's profile, we recommend denying the loan application.",
    sector="financial",
    context={"use_case": "credit_decisioning", "model": "gpt-4"},
)

print(f"Compliant: {result.passed}")
print(f"Risk score: {result.score:.2f}")
print(f"Violations: {len(result.violations)}")
for v in result.violations:
    print(f" [{v.severity}] {v.rule_id}: {v.explanation}")
    print(f" Regulation: {v.regulation_ref}")
```

- Example Output:

```
Compliant: False
Risk score: 0.82
Violations: 2
 [HIGH] ECOA-001: Credit decision output lacks required adverse action reasoning.
 Regulation: ECOA / Regulation B, 12 CFR 1002.9
 [MEDIUM] FAIR-002: Output does not reference specific, non-discriminatory factors.
 Regulation: ECOA / Regulation B, 12 CFR 1002.6
```
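One way such a result might feed a pre-deployment check is a small gate that blocks a release when the score or any violation crosses a threshold. The sketch below is an assumption-laden illustration: `release_gate`, its `max_score` threshold, and `blocking_severities` are hypothetical names, and the `SimpleNamespace` objects only mimic the `passed`, `score`, and `violations` fields printed in the usage example so that the snippet runs without the package installed.

```python
from types import SimpleNamespace


def release_gate(result, max_score=0.5, blocking_severities=("HIGH",)):
    """Return (ok, reasons) for any object with .score and .violations.

    Hypothetical helper: thresholds and severity names are assumptions.
    """
    reasons = []
    if result.score > max_score:
        reasons.append(
            f"risk score {result.score:.2f} exceeds {max_score:.2f}"
        )
    for v in result.violations:
        if v.severity in blocking_severities:
            reasons.append(f"{v.rule_id} ({v.severity}): {v.regulation_ref}")
    return (not reasons, reasons)


# Stand-in that mirrors the fields shown in the example output.
sample = SimpleNamespace(
    passed=False,
    score=0.82,
    violations=[
        SimpleNamespace(
            severity="HIGH",
            rule_id="ECOA-001",
            explanation="Credit decision output lacks required adverse action reasoning.",
            regulation_ref="ECOA / Regulation B, 12 CFR 1002.9",
        ),
        SimpleNamespace(
            severity="MEDIUM",
            rule_id="FAIR-002",
            explanation="Output does not reference specific, non-discriminatory factors.",
            regulation_ref="ECOA / Regulation B, 12 CFR 1002.6",
        ),
    ],
)

ok, reasons = release_gate(sample)
for reason in reasons:
    print("BLOCKED:", reason)
```

Keeping the gate separate from the evaluation lets a team tune thresholds per use case without touching the benchmark itself.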

Highlighted Details

- Sector-Adaptive Policy Engine: Dynamically loads industry-specific compliance rules (e.g., financial services, telecom, healthcare).

- Explainable Assessments: Provides graduated risk scores and detailed, regulation-specific reasoning for compliance failures.

- NIST AI RMF Alignment: Benchmarks map directly to the NIST AI Risk Management Framework's GOVERN, MAP, MEASURE, and MANAGE functions.

- Pre-built Benchmarks: Includes test suites for key regulations such as SOX, PCI-DSS, GLBA, ECOA/Reg B (Fair Lending), BSA/AML, HIPAA, FCC CPNI, and TCPA.
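As a rough picture of sector-adaptive loading, the regulations listed above could be grouped into a registry keyed by sector. The grouping, the `SUITES_BY_SECTOR` table, and the `load_suites` function are assumptions made for illustration; the README summary does not state how the project organizes its suites internally.

```python
# Hypothetical registry: the sector grouping below is an assumption,
# built only from the regulation names listed in the highlights.
SUITES_BY_SECTOR = {
    "financial": ("SOX", "PCI-DSS", "GLBA", "ECOA/Reg B", "BSA/AML"),
    "healthcare": ("HIPAA",),
    "telecom": ("FCC CPNI", "TCPA"),
}


def load_suites(sector):
    """Return the benchmark suite names registered for a sector."""
    key = sector.strip().lower()
    if key not in SUITES_BY_SECTOR:
        known = ", ".join(sorted(SUITES_BY_SECTOR))
        raise ValueError(f"unknown sector {sector!r}; expected one of: {known}")
    return SUITES_BY_SECTOR[key]


print(load_suites("Telecom"))
```

Failing loudly on an unknown sector, rather than returning an empty rule set, avoids reporting an output as compliant simply because no rules were loaded.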

Maintenance & Community

The repository includes a CONTRIBUTING.md file outlining development setup and contribution guidelines. No specific community channels (e.g., Discord, Slack) or notable contributors/sponsorships are mentioned in the README.

Licensing & Compatibility

- License: Apache 2.0.

- Compatibility: The Apache 2.0 license is permissive and generally compatible with commercial use and closed-source linking.

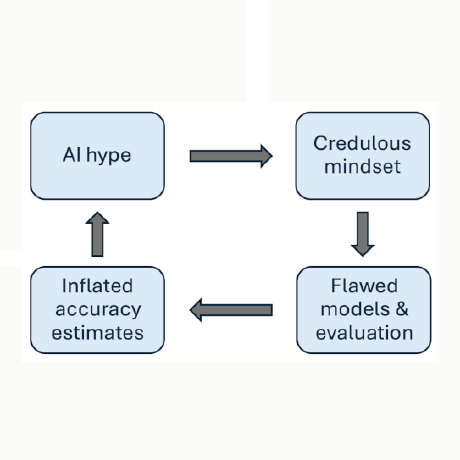

Limitations & Caveats

The provided README does not detail specific limitations, unsupported platforms, or known bugs. The project appears to be focused on evaluation benchmarks rather than model training or deployment infrastructure.

2 months ago

Inactive
